Patrick Brown of Stanford University in California, working with Ken Caldeira, has found that the planet will warm 10 to 20 per cent more than previously thought. Here is the critical graph from their research:

The IPCC usually averages out all available models, which gives a wide range of uncertainty. Brown and Caldeira wanted to narrow that uncertainty, so they chose only those models whose projections to date best matched real-world data on measures that matter.

- They chose several measures: for instance, how much heat is escaping from the top of Earth’s atmosphere. This is a direct measure of how much the total heat content of the sea, air and land surface is changing. Models that handle this well should be better at forecasting long-term temperature change.

“Warming is fundamentally a result of these radiation changes,” says Brown.

So:

- Once Brown had picked the best-performing models, he found they tend to project more warming (Nature, DOI: 10.1038/nature24672). “The models that warm the most are also the ones doing the best right now,” he says.

For instance, for a scenario called RCP8.5 in which emissions continue unabated, the models used by the Intergovernmental Panel on Climate Change project an average of 4.3°C warming by 2081 to 2100, plus or minus 0.7°C. But the best-performing models project 4.8°C, plus or minus 0.4°C.

For a scenario that assumes more action is taken to combat climate change, called RCP6, the IPCC models project 2.8°C on average, compared with 3.2°C for the best models. The world is currently on a course somewhere between these two scenarios.
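The central estimates above can be checked against the headline claim of 10 to 20 per cent more warming. This is a minimal sketch that uses only the averages quoted from the article and ignores the plus-or-minus ranges. The dictionary layout and the `relative_increase` helper are illustrative names of my own, not anything from the study.

```python
# Central warming estimates (°C, 2081-2100) as quoted in the New Scientist piece.
# The structure and key names here are illustrative, not from the paper.
projections = {
    "RCP8.5": {"ipcc_average": 4.3, "best_models": 4.8},
    "RCP6": {"ipcc_average": 2.8, "best_models": 3.2},
}


def relative_increase(baseline: float, constrained: float) -> float:
    """Fractional increase of the observationally constrained estimate over the baseline."""
    return (constrained - baseline) / baseline


for scenario, values in projections.items():
    pct = 100 * relative_increase(values["ipcc_average"], values["best_models"])
    print(f"{scenario}: {pct:.1f}% more warming")
```

That works out to about 11.6 per cent more for RCP8.5 and about 14.3 per cent more for RCP6, both inside the 10 to 20 per cent range.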

No-one can be sure that the new research is right; Brown says it may underestimate warming. Much of the variance relates to the presumed cooling effect of clouds and how cloudy it is going to be as the Earth warms. It appears that the evidence favours clouds not having the cooling effect some models assume.

So far I’ve been quoting from an article by Michael Le Page in the New Scientist, which is bound to be pay-walled.

There is a longer explanation at Patrick Brown’s site. This quote is a worry:


Secondly, the range of modeled global warming projections for a given change in radiative forcing does not represent the true full uncertainty. This is because there are a finite number of models, they are not comprehensive, and they do not sample the full uncertainty space of various physical processes. For example, a rapid nonlinear melting of the Greenland and Antarctic ice sheets has some plausibility (e.g., Hansen et al. 2016) but this is not represented in any of the models studied here and thus it has an effective probability of zero in both the raw unconstrained and observationally-informed projections. Because of considerations like this, the raw model spread is best thought of as a lower bound on total uncertainty (Caldwell et al., 2016) and thus our observationally-informed spread represents a reduction in this lower bound rather than a reduction in the upper bound.

In short, the models do not represent ice-sheet decay or the other longer-term factors that Hansen has been warning us about for a long time.

Then from Carnegie Science we have this from Caldeira:


“Our study indicates that if emissions follow a commonly used business-as-usual scenario, there is a 93 percent chance that global warming will exceed 4 degrees Celsius (7.2 degrees Fahrenheit) by the end of this century. Previous studies had put this likelihood at 62 percent.”

The Washington Post consulted other scientists, who were not fully convinced. This statement is important:

“It’s great that people are doing this well and we should continue to do this kind of work — it’s an important complement to assessments of sensitivity from other methods,” added Gavin Schmidt, who heads NASA’s Goddard Institute for Space Studies. “But we should always remember that it’s the consilience of evidence in such a complex area that usually gives you robust predictions.”

Schmidt noted future models might make this current finding disappear — and also noted the increase in warming in the better models found in the study was relatively small.

The New Scientist links to an article that looked at all the current work analytically and took some account of longer-term factors. I'll just quote the last paragraph:

- That means we need to get our act together and cut emissions now, says Shindell. If we don’t, warming could accelerate rapidly in 20 or 30 years as countries in Asia cut aerosol emissions. A combination of low aerosols, high sensitivity and high CO2 emissions could lead to global temperatures rising by as much as 5°C or 6°C by 2100.

That was March 2014, and it cited work by Gavin Schmidt among others.

Then on 31 October this year, the New Scientist ran another article, on carbon in soils. The models assume that there will be an uptake of carbon by growth in warmer soils. A study by Charles Koven of the Lawrence Berkeley National Laboratory in California and colleagues found that there will instead be a net loss, and quite a big one. That is just from the top metre of soil; they did not look at deep methane.


At present, the soil models used to inform climate models suggest that in high latitudes the growth effect will win out, and carbon storage will increase. But they are wrong, according to a study by Charles Koven … and colleagues.

“Our work shows a net loss instead,” says Koven. “A quite large one.”

They found:

- There’s more bad news on the climate front: soils in cold climates could release far more carbon than expected as the world warms.

For every half a degree Celsius of warming, this extra carbon could be roughly equivalent to one year's emissions from all human sources. That makes the task of limiting warming to 2°C even harder…

Assuming two degrees is a sensible limit, which it isn’t.

It’s tricky measuring the net effect on soils all over the world. However, we can’t just wait for the science to become settled and reach consilience, as the Washington Post suggests.

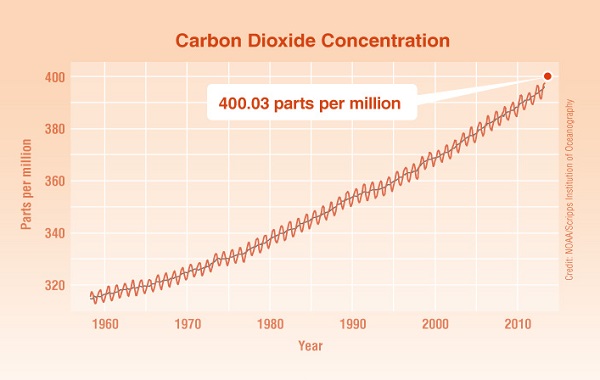

I'd remind people that where it matters, in the actual greenhouse gas levels in the atmosphere, this is how we are tracking according to the WMO via NASA:

A child born now will be 83 by 2100.

Maybe as young as 13 when the 2°C limit is reached.

John, it's not a happy prospect. In many ways we have lived in the best of times. The sad fact is that 4°C is generally held to be incompatible with civilisation as we know it.

Emmanuel Macron says the world is losing the fight against climate change: ‘We’re not moving quick enough’

In truth, however, neither he nor any of his peers are taking the situation seriously enough.

No, not toast.

Primarily rock, much of it molten; with a thin smear of sundry biota near sea level.

Average planet near average star; nothing special.